1. The Big Picture

text

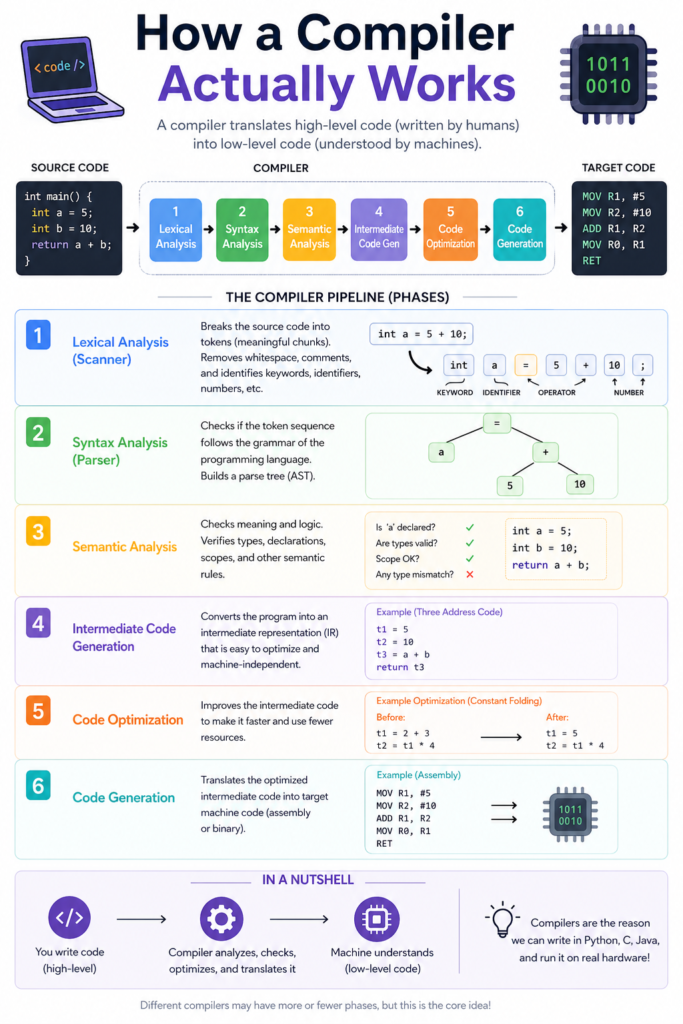

SOURCE CODE MACHINE CODE (hello.c) → [ COMPILER ] → (hello.exe / a.out)

A compiler is a translator from a high-level language (C, C++, Rust, etc.) to low-level machine instructions (binary) that a CPU can execute.

But internally, it’s not a single step — it’s a pipeline of phases.

2. The Six Major Phases (With Visuals)

Let’s take a tiny C program:

c

int add(int a, int b) {

return a + b;

}

Phase 1: Lexical Analysis (Tokenization)

Input: stream of characters

Output: tokens (atoms like keywords, identifiers, operators, literals)

text

characters: i n t a d d ( i n t a , i n t b ) tokens: KEYWORD(int) IDENT(add) LPAREN KEYWORD(int) IDENT(a) COMMA KEYWORD(int) IDENT(b) RPAREN LBRACE RETURN IDENT(a) PLUS IDENT(b) SEMICOLON RBRACE

Visual:"int" → [INT]"add" → [ID]"(" → [LPAREN]

…

Phase 2: Syntax Analysis (Parsing)

Tokens → Abstract Syntax Tree (AST) following grammar rules.

text

return_stmt

/ \

PLUS binary_op? no, just a+b

/ \

ID(a) ID(b)

Better visualized as:

text

function_definition

/ | \

type: int name: add body (block)

|

return_stmt

|

binary_op: +

/ \

expr: a expr: b

AST ignores punctuation like commas and semicolons — just structure.

Phase 3: Semantic Analysis

Checks meaning (type checking, scope, etc.).

Example:int a = "hello"; ← Type error, caught here.

Also builds a symbol table:

| Name | Type | Scope |

|---|---|---|

| add | int→int→int | global |

| a | int | param |

| b | int | param |

Phase 4: Intermediate Representation (IR) Generation

AST → lower-level, machine-independent IR (e.g., three-address code).

text

t1 = a + b return t1

Or in LLVM IR style:

text

%result = add i32 %a, %b ret i32 %result

IR is easier to optimize than raw AST.

Phase 5: Optimization

Optimize IR without knowing the target CPU.

Example improvements:

- Constant folding:

x = 3 + 5→x = 8 - Dead code elimination: remove

if (false) { ... } - Inline small functions: replace

add(a,b)witha+b

Phase 6: Code Generation

IR → assembly / machine code for a specific CPU (x86, ARM, etc.).

add(a, b) on x86-64 might become:

asm

lea eax, [rdi + rsi] ; rdi = a, rsi = b, eax = result ret

Then assembler + linker produce executable binary.

3. Visual Pipeline Summary (Text Diagram)

text

Source File

│

▼

┌─────────────┐

│ Lexer │ ──► token stream

└─────────────┘

│

▼

┌─────────────┐

│ Parser │ ──► AST

└─────────────┘

│

▼

┌─────────────┐

│ Semantic │ ──► annotated AST + symbol table

│ Analyzer │

└─────────────┘

│

▼

┌─────────────┐

│ IR Gen │ ──► IR (three-address code)

└─────────────┘

│

▼

┌─────────────┐

│ Optimizer │ ──► optimized IR

└─────────────┘

│

▼

┌─────────────┐

│ Code Gen │ ──► assembly / machine code

└─────────────┘

│

▼

Executable

4. Real Compiler Examples

| Compiler | Language | Phases combined |

|---|---|---|

| GCC | C/C++ | 6+ passes, many optimizations |

| Clang/LLVM | C/C++/Rust | clean separation: frontend (Clang) → IR → backend (LLVM) |

| javac | Java | source → bytecode (JVM IR) |

5. Key Takeaway

A compiler is not magic — it’s a deterministic pipeline:

- Lexer breaks text into tokens.

- Parser builds a tree (AST).

- Semantic validates types and scopes.

- IR flattens to lower-level ops.

- Optimizer improves IR.

- Code generator emits machine code.