OS Concepts You’ll Use in Every Job: Processes, Threads, and Mutexes

The Core Trio: Processes, Threads, Mutexes

```text
┌─────────────────────────────────────────────────────────┐
│                      YOUR PROGRAM                       │
│                                                         │
│   ┌─────────────┐     spawns     ┌─────────────┐        │
│   │   PROCESS   │ ─────────────► │   THREADS   │        │
│   │   (heavy)   │                │   (light)   │        │
│   └─────────────┘                └──────┬──────┘        │
│                                         │               │
│                                         │ needs sync    │
│                                         │               │
│                                  ┌──────▼──────┐        │
│                                  │    MUTEX    │        │
│                                  │   (lock)    │        │
│                                  └─────────────┘        │
└─────────────────────────────────────────────────────────┘
```

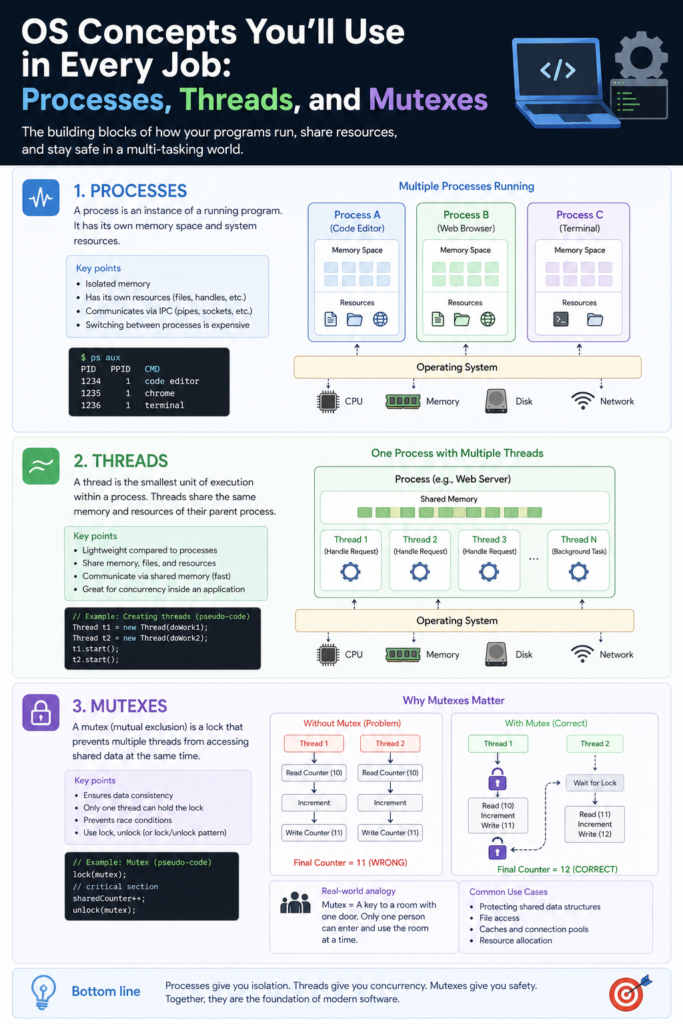

1. Process: The “Running Program”

Mental model: A house.

- Has its own address space (rooms)

- Doesn’t share memory with other houses (by default)

- Expensive to build (fork) and to switch between (context switch), compared with threads

- Dies = house demolished

When you use it (daily):

- Running `node server.js` → one process

- Running `pg_ctl start` → the PostgreSQL server process

- Every terminal tab = a separate shell process

Key commands you’ll actually type:

```bash
ps aux          # list all processes
top             # see CPU/memory per process
kill 1234       # ask process 1234 to exit (SIGTERM)
kill -9 1234    # force kill process 1234 (SIGKILL, last resort)
```

What you need to know (not trivia):

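- Processes isolate failures: one crashing process doesn’t take the others down

- Inter-process communication (IPC: pipes, sockets, files) is slower than threads sharing memory, because data has to be copied between address spaces

- Chrome runs tabs in separate processes for this reason: one crashed tab doesn’t take down the whole browser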
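To see both properties at once, here is a minimal sketch for Linux/macOS (it relies on `os.fork`, so it won’t run on Windows). The forked child changes its own copy of `counter` and then exits with an error code, standing in for a crash. `fork_demo` is a name made up for this example.

```python
import os

counter = 0  # lives in the parent's memory

def fork_demo() -> tuple[int, int]:
    """Fork a child that modifies `counter` and then exits with an error.

    Returns (child's exit code, parent's counter afterwards). POSIX only.
    """
    global counter
    pid = os.fork()                  # duplicate this process
    if pid == 0:                     # --- child process ---
        counter += 100               # changes the CHILD's copy only
        os._exit(1)                  # simulate a crash: exit at once with code 1
    _, status = os.waitpid(pid, 0)   # --- parent: wait for the child ---
    return os.WEXITSTATUS(status), counter

if __name__ == "__main__":
    exit_code, value = fork_demo()
    print(f"child exit code: {exit_code}")  # 1: the child failed
    print(f"parent counter: {value}")       # 0: the parent's memory was untouched
```

The parent learns about the failure only through the exit code it collects with `os.waitpid`, and then carries on.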

2. Thread: The “Unit of Execution”

Mental model: Workers inside one house.

- Same house (memory), different workers

- Cheaper than processes to create and to switch between

- Can step on each other’s toes (shared data)

When you use it (daily — probably without realizing):

Web server handling requests:

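```python
# FastAPI runs plain `def` endpoints in a worker thread pool;
# Flask's built-in server starts a thread per request
from fastapi import FastAPI

app = FastAPI()

@app.get("/user")
def get_user():       # runs in SOME worker thread
    return {"id": 1}  # which thread? doesn't matter
```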
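What the framework does underneath can be modeled with a thread pool. This is a simplified sketch, not Flask’s or FastAPI’s actual code: `get_user` here is a plain function taking a hypothetical `request_id`, and the pool plays the role of the server handing each request to a free worker thread.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

def get_user(request_id: int) -> dict:
    """Stand-in for an endpoint handler (hypothetical)."""
    return {"id": request_id, "thread": threading.current_thread().name}

# Roughly what a threaded server does: hand each incoming request to a free worker.
with ThreadPoolExecutor(max_workers=4, thread_name_prefix="worker") as pool:
    responses = list(pool.map(get_user, range(8)))  # results come back in request order

for response in responses:
    print(response)  # e.g. {'id': 0, 'thread': 'worker_0'}; the worker varies between runs
```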

Background task in GUI:

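```javascript
// Browser: main (UI) thread vs. Web Worker
setTimeout(() => {
  // setTimeout does NOT start a new thread: this callback runs later on the
  // main thread. For CPU-heavy background work, use a Web Worker instead.
  console.log("UI stayed responsive during the 1-second wait");
}, 1000);
```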

Parallel processing:

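```python
from threading import Thread

urls = [f"https://example.com/file{i}" for i in range(4)]  # placeholder URLs

def download(url):
    print(f"downloading {url}")  # real code would do network I/O here

# 4 threads downloading simultaneously (one per URL).
# Waiting on the network releases CPython's GIL, so I/O-bound work like this overlaps well.
threads = [Thread(target=download, args=(url,)) for url in urls]
for t in threads:
    t.start()
for t in threads:
    t.join()  # wait until every download has finished
```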
The real catch: Threads share memory → race conditions.

3. Mutex (Mutual Exclusion Lock)

Mental model: Bathroom key.

- Only one thread holds the key at a time

- Others wait outside

- Forgetting to return the key = deadlock

Problem it solves:

```python
# BAD: two threads call add_money() at the same time
balance = 100

def add_money():
    global balance
    temp = balance    # Thread A reads 100; Thread B also reads 100 (before A writes)
    temp = temp + 10  # both compute 110
    balance = temp    # both write 110: one deposit of 10 is lost!
```
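The bad interleaving depends on timing, so it can be hard to reproduce on demand. This sketch forces it with a `threading.Barrier` (a demo-only addition, not something you’d ship): both threads read `balance` before either writes, so one deposit is always lost.

```python
import threading

balance = 100
# Demo-only helper: makes both threads finish reading before either one writes.
both_have_read = threading.Barrier(2)

def add_money_unsafely() -> None:
    global balance
    temp = balance          # each thread reads 100
    both_have_read.wait()   # force the unlucky timing: wait until BOTH have read
    temp = temp + 10
    balance = temp          # each thread writes 110

threads = [threading.Thread(target=add_money_unsafely) for _ in range(2)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(balance)  # 110, not 120: one deposit was lost
```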

Solution with mutex:

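```python
from threading import Lock

balance = 100
lock = Lock()

def add_money():
    global balance
    lock.acquire()      # Get the key (waits while another thread holds it)
    try:
        temp = balance
        temp = temp + 10
        balance = temp
    finally:
        lock.release()  # Return the key, even if the code above raises
    # Idiomatic shorthand for this acquire/try/finally/release: `with lock:`
```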
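A quick way to check the fix is to hammer the function from several threads. This sketch uses `with lock:`, the idiomatic shorthand for acquire/try/finally/release, plus a `times` parameter (added for the test) so each thread deposits many times.

```python
import threading

balance = 100
lock = threading.Lock()

def add_money(times: int) -> None:
    """Deposit 10 `times` times, holding the lock around each read-modify-write."""
    global balance
    for _ in range(times):
        with lock:  # acquire, and release on exit even if an exception is raised
            temp = balance
            temp = temp + 10
            balance = temp

threads = [threading.Thread(target=add_money, args=(10_000,)) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()  # read the result only after every thread has finished

print(balance)  # 800100 = 100 + 8 threads * 10_000 deposits * 10
```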

Real-world mutex you’ve seen:

- Database row lock: `SELECT ... FOR UPDATE`

- Git lock file: `.git/index.lock`

- File lock: `flock` in shell scripts
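In Python on Linux/macOS, the same kind of lock that `flock` takes in a shell script is available through `fcntl.flock`. This sketch holds an exclusive lock on a hypothetical lock file and shows that a second, independent `open()` of that file is refused, just as another process would be.

```python
import fcntl
import os
import tempfile

# Hypothetical lock file for a job that must never run twice at once.
LOCK_PATH = os.path.join(tempfile.gettempdir(), "nightly-report.lock")

with open(LOCK_PATH, "w") as holder:
    fcntl.flock(holder, fcntl.LOCK_EX)  # take the key (blocks if someone else has it)

    # A separate open() gets its own open file description, so it competes
    # for the lock just like another process would.
    with open(LOCK_PATH, "w") as contender:
        try:
            fcntl.flock(contender, fcntl.LOCK_EX | fcntl.LOCK_NB)  # try without waiting
            second_got_lock = True
        except BlockingIOError:
            second_got_lock = False  # key is taken: skip this run or retry later

    fcntl.flock(holder, fcntl.LOCK_UN)  # return the key (closing the file also does this)

print(second_got_lock)  # False
```

Dropping `LOCK_NB` makes the second attempt wait instead of failing, which suits jobs that should queue up rather than skip.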

The Three Rules You’ll Live By

Rule 1: Keep shared state minimal

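```python
# BAD: shared counter
counter = 0

def worker():
    global counter
    counter += 1  # read-modify-write on shared state: needs the mutex EVERYWHERE it's touched

# GOOD: state local to the thread
def worker():
    local_counter = 0     # only this thread sees it: no lock needed
    local_counter += 1
    return local_counter  # hand the result back; combine after the threads finish
```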
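Scaled up, the “local state” pattern looks like this sketch: each task counts into its own local variable and returns the result, and the main thread adds up the partial results once at the end. Counting even numbers is just a stand-in workload.

```python
from concurrent.futures import ThreadPoolExecutor

def count_evens(chunk: list[int]) -> int:
    """Count even numbers in one chunk, using only a local variable (no lock)."""
    local_counter = 0
    for n in chunk:
        if n % 2 == 0:
            local_counter += 1
    return local_counter

numbers = list(range(1_000))
chunks = [numbers[i:i + 250] for i in range(0, len(numbers), 250)]  # 4 chunks of 250

with ThreadPoolExecutor(max_workers=4) as pool:
    partial_counts = list(pool.map(count_evens, chunks))  # one result per chunk

total = sum(partial_counts)   # combine once, after all tasks are done
print(partial_counts, total)  # [125, 125, 125, 125] 500
```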

Rule 2: Always acquire locks in the same order

```text
# DEADLOCK: opposite lock order
Thread A: lock(a) → lock(b)
Thread B: lock(b) → lock(a)
# A holds a and waits for b; B holds b and waits for a 💀

# FIX: same order everywhere
Thread A: lock(a) → lock(b)
Thread B: lock(a) → lock(b)   # works
```
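A common way to enforce one order is to rank the locks, for example by account id, and always take the lower-ranked one first. The `Account` class and `transfer` function below are hypothetical; the point is that transfers in opposite directions still lock in the same order.

```python
import threading

class Account:
    """Hypothetical account that carries its own lock."""

    def __init__(self, account_id: int, balance: int) -> None:
        self.id = account_id
        self.balance = balance
        self.lock = threading.Lock()

def transfer(src: Account, dst: Account, amount: int) -> None:
    """Move `amount` from src to dst (assumes src and dst are different accounts).

    Locks are always taken lowest id first, so a→b and b→a transfers
    acquire them in the same order and cannot deadlock each other.
    """
    first, second = (src, dst) if src.id < dst.id else (dst, src)
    with first.lock:
        with second.lock:
            src.balance -= amount
            dst.balance += amount

def transfer_many(src: Account, dst: Account, times: int) -> None:
    for _ in range(times):
        transfer(src, dst, 1)

a = Account(1, 1_000)
b = Account(2, 1_000)

# Opposite directions at the same time: the classic deadlock setup.
t1 = threading.Thread(target=transfer_many, args=(a, b, 10_000))
t2 = threading.Thread(target=transfer_many, args=(b, a, 10_000))
for t in (t1, t2):
    t.start()
for t in (t1, t2):
    t.join()

print(a.balance, b.balance)  # 1000 1000: both threads finished, total preserved
```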

Rule 3: Use higher-level abstractions when possible

Instead of raw mutexes:

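Use queues (Python):

```python
from queue import Queue

q = Queue()
q.put("job-1")  # thread-safe internally
job = q.get()   # blocks until an item is available
```

Use atomic operations (Java; C++ has `std::atomic<int>`):

```java
AtomicInteger counter = new AtomicInteger(0);  // java.util.concurrent.atomic
counter.incrementAndGet();                     // atomic increment, no explicit lock
```

Use database transactions (SQL):

```sql
BEGIN;
UPDATE accounts SET balance = balance - 100 WHERE id = 1;
UPDATE accounts SET balance = balance + 100 WHERE id = 2;
COMMIT;  -- the database handles the row locking
```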
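Here is what the queue approach looks like with real worker threads: a minimal producer/consumer sketch. `Queue` does all the locking; the `None` sentinel that tells workers to stop is a convention chosen for this example.

```python
import threading
from queue import Queue

jobs: Queue = Queue()
results: Queue = Queue()
STOP = None  # sentinel meaning "no more work" (a convention for this sketch)

def worker() -> None:
    while True:
        item = jobs.get()         # blocks until a job arrives; Queue does the locking
        if item is STOP:
            break
        results.put(item * item)  # send the result back through a second queue

workers = [threading.Thread(target=worker) for _ in range(4)]
for w in workers:
    w.start()

for n in range(10):
    jobs.put(n)                   # producer side: no explicit lock anywhere
for _ in workers:
    jobs.put(STOP)                # one sentinel per worker so each one exits
for w in workers:
    w.join()

squares = sorted(results.get() for _ in range(results.qsize()))
print(squares)  # [0, 1, 4, 9, 16, 25, 36, 49, 64, 81]
```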

Real Job Scenarios

Scenario 1: Backend API rate limiter

```python
from threading import Lock

# Need to track requests per user across threads
requests_per_user = {}  # shared dict
mutex = Lock()
LIMIT = 100

def rate_limit(user_id):
    with mutex:  # check-and-increment must happen as one step
        count = requests_per_user.get(user_id, 0)
        if count >= LIMIT:  # `>` would let request #101 through
            return False
        requests_per_user[user_id] = count + 1
        return True
```
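In this rate limiter the counts never reset, so each user gets 100 requests in total rather than 100 per minute. One way to add a time window is a fixed-window limiter: the sketch below (the class name and the 60-second window are assumptions) clears all counters when the window expires, still under a single lock.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

class FixedWindowRateLimiter:
    """Allow at most `limit` requests per user in each `window_seconds` window.

    Hypothetical class for illustration. One lock guards both the counters
    and the window start, so check-and-increment happens as a single step.
    """

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._lock = threading.Lock()
        self._counts: dict[str, int] = {}
        self._window_start = time.monotonic()

    def allow(self, user_id: str) -> bool:
        with self._lock:
            now = time.monotonic()
            if now - self._window_start >= self.window_seconds:
                self._counts.clear()  # window expired: start counting from zero
                self._window_start = now
            count = self._counts.get(user_id, 0)
            if count >= self.limit:
                return False
            self._counts[user_id] = count + 1
            return True

limiter = FixedWindowRateLimiter(limit=100, window_seconds=60)

# 200 concurrent requests from one user: exactly 100 should be allowed.
with ThreadPoolExecutor(max_workers=20) as pool:
    decisions = list(pool.map(lambda _: limiter.allow("alice"), range(200)))

print(decisions.count(True))  # 100
```

A fixed window lets a user burst up to twice the limit across a window boundary; sliding-window or token-bucket limiters smooth that out at the cost of more bookkeeping.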

Scenario 2: Loading cache in background

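```python
from threading import Lock

cache = {}
cache_lock = Lock()

def get_data(key):
    # Fast path: no lock for reading (fine in CPython, where a single dict
    # lookup is atomic; a plain Java HashMap would need ConcurrentHashMap here)
    if key in cache:
        return cache[key]
    # Slow path: lock only for the update
    with cache_lock:
        if key not in cache:  # double-check: another thread may have filled it while we waited
            cache[key] = fetch_from_db(key)  # fetch_from_db: your own loader
        return cache[key]
```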
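To check that the double-check really limits the database to one call per key, this sketch swaps in a fake `fetch_from_db` (hypothetical, with a short sleep to simulate latency and a call counter) and fires 100 concurrent lookups for the same key.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

cache: dict[str, str] = {}
cache_lock = threading.Lock()
db_calls = 0  # how many times the fake database was hit

def fetch_from_db(key: str) -> str:
    """Stand-in for a slow database query (hypothetical)."""
    global db_calls
    db_calls += 1     # only ever called while cache_lock is held
    time.sleep(0.05)  # simulate latency so other threads pile up on the miss
    return f"value-for-{key}"

def get_data(key: str) -> str:
    if key in cache:          # fast path: no lock
        return cache[key]
    with cache_lock:          # slow path: one thread at a time fills the cache
        if key not in cache:  # double-check: someone may have filled it already
            cache[key] = fetch_from_db(key)
        return cache[key]

with ThreadPoolExecutor(max_workers=16) as pool:
    values = list(pool.map(get_data, ["user:42"] * 100))

print(db_calls, set(values))  # 1 {'value-for-user:42'}
```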

Scenario 3: Logging from multiple threads

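```python
from datetime import datetime
from threading import Lock

# Without a mutex, lines from different threads get scrambled:
# "2024-01-01 ER2024-01-01 INFO ROR"
log_lock = Lock()
log_file = open("app.log", "a")  # example log file path

def log(message):
    with log_lock:
        line = f"{datetime.now()} {message}"
        print(line)
        log_file.write(line + "\n")  # the file write also needs the lock
```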
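In Python you often don’t need your own lock for this: handlers in the standard `logging` module take a per-handler lock around each record they emit. A minimal sketch, with an in-memory stream standing in for a log file and a hypothetical logger name:

```python
import io
import logging
import threading

stream = io.StringIO()  # stands in for a log file or stderr
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("%(asctime)s %(threadName)s %(levelname)s %(message)s"))

logger = logging.getLogger("orders")  # hypothetical logger name
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False  # keep this demo's records out of the root logger

def work(n: int) -> None:
    for i in range(100):
        # The handler holds its own lock while writing each record,
        # so lines from different threads never interleave mid-line.
        logger.info("job %d step %d", n, i)

threads = [threading.Thread(target=work, args=(n,), name=f"worker-{n}") for n in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

lines = stream.getvalue().splitlines()
print(len(lines))  # 800: one intact line per logger.info() call
```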

Visual Cheatsheet

```text
┌────────────────────────────────────────────────────────────┐
│ PROCESS                                                    │
│  ┌──────────────────────────────────────────────────────┐  │
│  │ MEMORY (heap, stack, code, static data)              │  │
│  │                                                      │  │
│  │    ┌────────┐  ┌────────┐  ┌────────┐                │  │
│  │    │THREAD 1│  │THREAD 2│  │THREAD 3│                │  │
│  │    │        │  │        │  │        │                │  │
│  │    │ stack  │  │ stack  │  │ stack  │                │  │
│  │    │ regs   │  │ regs   │  │ regs   │                │  │
│  │    └───┬────┘  └───┬────┘  └───┬────┘                │  │
│  │        │           │           │                     │  │
│  │        └───────────┼───────────┘                     │  │
│  │                    │                                 │  │
│  │             ┌──────▼──────┐                          │  │
│  │             │    MUTEX    │ ← only one thread        │  │
│  │             │   (lock)    │   inside at once         │  │
│  │             └──────┬──────┘                          │  │
│  │                    │                                 │  │
│  │             ┌──────▼──────┐                          │  │
│  │             │ SHARED DATA │                          │  │
│  │             │ { balance,  │                          │  │
│  │             │   cache,    │                          │  │
│  │             │   users }   │                          │  │
│  │             └─────────────┘                          │  │
│  └──────────────────────────────────────────────────────┘  │
└────────────────────────────────────────────────────────────┘
```

What You Actually Need to Remember

| Concept | Daily use | What to remember |
|---|---|---|
| Process | Running services, `ps`, `kill` | Memory isolation, slow to create |
| Thread | Web servers, background tasks | Shared memory, race conditions |
| Mutex | Any shared counter/cache | Lock order, deadlocks |

The 80/20 rule:

- 80% of bugs come from shared state without mutexes

- 20% come from deadlocks (wrong lock order)

Your debugging toolkit:

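```bash
# See threads inside a process
ps -T -p <PID>
top -H -p <PID>

# Python: dump every thread's stack on demand. First, in your program:
#   import faulthandler, signal; faulthandler.register(signal.SIGUSR1)
# (faulthandler.enable() alone only dumps on crashes such as segfaults)
kill -USR1 <PID>

# Java: thread dump with held/awaited locks and any detected deadlocks
jstack <PID>
kill -3 <PID>   # same dump, printed to the JVM's stdout
```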
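When a hang looks like a deadlock, you can also make the code report it. A sketch, assuming you’re free to replace a plain `lock.acquire()` at a suspect spot: `acquire_or_dump` (a hypothetical helper) waits with a timeout and, if it expires, prints every thread’s stack with `faulthandler.dump_traceback`.

```python
import faulthandler
import sys
import threading

def acquire_or_dump(lock: threading.Lock, timeout: float, out=None) -> bool:
    """Take `lock`, or give up after `timeout` seconds and dump all thread stacks.

    Hypothetical helper. `out` must be a real file with a fileno() (default:
    the process's original stderr), because faulthandler writes to the
    file descriptor directly.
    """
    if lock.acquire(timeout=timeout):
        return True  # caller now holds the lock and must release it
    faulthandler.dump_traceback(file=out if out is not None else sys.__stderr__, all_threads=True)
    return False

if __name__ == "__main__":
    stuck = threading.Lock()
    stuck.acquire()  # simulate a code path that never returns the key
    if not acquire_or_dump(stuck, timeout=0.5):
        print("possible deadlock: see the thread stacks above")
```

The dump shows where each thread is blocked; together with knowing which locks each function takes, that is usually enough to spot two threads waiting on each other.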

Final Takeaway

You don’t need to implement schedulers or memory managers. You just need to remember:

- Processes = separate houses (expensive, isolated)

- Threads = workers in same house (cheap, shared)

- Mutex = bathroom key (only one at a time)